I meet with my application development team every week to review how everyone is doing, go through active projects, discuss revenue and track the sales pipeline. To facilitate the meeting, I wrote an Angular 2 application that interfaces with exported files and APIs to pull together the information in a central, cohesive format. Angular is changing rapidly and sometimes that can create conflicts.

npm, the Node.js package manager, relies on semantic versioning, and a package can declare each dependency as a version range (for example, “this version or anything newer”) rather than an exact version. If someone installs the package after its dependencies have published updates, they get different versions than someone who installed earlier, so different users may end up with different builds on their machines. I recently ran into this trying to get the application development project to build for a team member who is taking it over. We tried reinstalling the dependencies, reinstalling Node, updating npm, and several other options, but the behavior on their machine just wasn’t the same as the behavior on mine.

That’s when it clicked. What is one of the main advantages of Docker that I discuss when I present at conferences? The fact that “it works on my machine” is no longer a valid excuse. Instead, we use:

“It works on Docker.”

If you can get your container to run in Docker, it stands to reason it should run consistently on any Docker host.

Docker 17.05 and later support a concept called multi-stage builds. This lets you define intermediate build stages that do their work in their own images, then copy output from those stages into the final image. This is perfect for building the sync project (and in the future means developers won’t even need Node on their machine to build it, although who doesn’t want Node?).

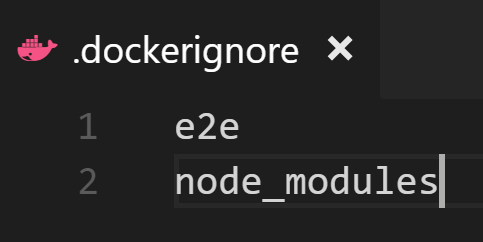

First, I created a .dockerignore file. I don’t use end-to-end testing and the build machine will install its own dependencies, so I ignored the folders for both (e2e and node_modules in a default Angular CLI project).
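For a project with the default Angular CLI layout, the file might look something like this. The folder names are an assumption: they presume end-to-end tests live in e2e and installed packages in node_modules.

```
# Hypothetical .dockerignore for a default Angular CLI project layout
# End-to-end tests are not used by the build
e2e
# Dependencies are installed fresh inside the build stage
node_modules
```

Keeping node_modules out of the build context also makes the context sent to the Docker daemon much smaller.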

Next, I got to work on the Dockerfile.

The Angular Command Line Interface (CLI) uses Node, so it made sense to start with a Node image. I am giving this container the alias build so I can reference it later. On the image, I install the specific version of the CLI I am using. Then I create a directory, copy the Angular source to that directory, install dependencies and build for production. This creates a folder named dist on the container with the assets I need to run my application.
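A minimal sketch of what that first stage could look like. The Node tag, the CLI version, and the /app path are placeholders I am assuming for illustration, so pin whatever versions the project is known to build with.

```dockerfile
# Stage 1: build the Angular app. The alias "build" lets a later stage copy from it.
# The Node tag is an assumption; use the version the project is known to build with.
FROM node:8 AS build

# Placeholder CLI version (hypothetical); replace with the exact version in use.
ARG NG_CLI_VERSION=1.2.0
RUN npm install -g @angular/cli@${NG_CLI_VERSION}

# Create a working directory and copy the Angular source into it
# (anything listed in .dockerignore is left out of the copy).
RUN mkdir -p /app
WORKDIR /app
COPY . /app

# Install the project's dependencies and produce a production build in /app/dist.
RUN npm install
RUN ng build --prod
```

The `--prod` flag matches the Angular CLI 1.x line; newer CLI releases express a production build differently, so adjust that line if the CLI version changes.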

Next, in the same Dockerfile, I continue with my target image. The app is run locally for the team, so I don’t need massive scale; I started with the simple and extremely small busybox image.

I create a directory for the web content, then copy the assets from the previous image (remember, I gave it the alias build) into the new directory. I expose the HTTP port and instruct the container to run BusyBox’s httpd daemon in the foreground (so the container keeps running) on port 80 with a home directory of /www.
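The second stage might be sketched like this, continuing the same Dockerfile. It assumes the earlier stage is aliased build and left its output in /app/dist; that path is a placeholder, so match it to wherever the build actually writes dist.

```dockerfile
# Stage 2: the image that actually ships. Nothing from the build stage is kept
# except what is copied explicitly below.
FROM busybox

# Directory that will hold the static site.
RUN mkdir /www

# Pull the compiled assets out of the stage aliased "build"
# (/app/dist is an assumed path).
COPY --from=build /app/dist /www

# Document the HTTP port, then run httpd in the foreground (-f)
# on port 80 (-p) serving files from /www (-h).
EXPOSE 80
CMD ["httpd", "-f", "-p", "80", "-h", "/www"]
```

Because only the final stage ends up in the tagged image, Node, the CLI, and node_modules never ship with the app.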

Next is a simple command I run from the root of the project to build the image:

    docker build -t ng2appdev .

The first time takes a bit of time as it pulls the images and preps them, but eventually it successfully builds and generates an image that has everything needed to run the app. I can launch it like this:

    docker run -d -p 80:80 ng2appdev

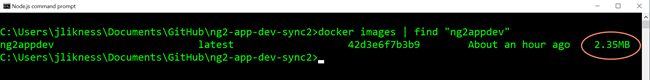

That instructs Docker to run the image I just created in “detached” mode (in the background) and map port 80 on the host to port 80 in the container. Then I browse to localhost and the app is there, ready and waiting! The image doesn’t take up too much space, either, so it’s easy to pass around.

In fact, why even make anyone bother with the build? To improve the process we can do two things:

1. Create an image called ng2appdevbuilder that has the CLI installed. That way we don’t have to reinstall it every time or worry about it becoming deprecated in the future. It encapsulates a stable, consistent build environment.

2. On a build machine, automate it to pull down the latest application source code and assets, use the build image to build the app, then publish the result. Trigger this each time the source code is changed.
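As a rough sketch of the first idea, the builder could be its own small Dockerfile. The file name Dockerfile.builder, the Node tag, and the CLI version are all hypothetical placeholders.

```dockerfile
# Dockerfile.builder (hypothetical file name): a reusable build environment.
# Build it once with: docker build -f Dockerfile.builder -t ng2appdevbuilder .
FROM node:8

# Placeholder CLI version (hypothetical); pin the version the project uses.
ARG NG_CLI_VERSION=1.2.0
RUN npm install -g @angular/cli@${NG_CLI_VERSION}
```

The application’s Dockerfile could then start its first stage from that image instead of installing the CLI on every build:

```dockerfile
# First stage of the app Dockerfile, now based on the prebuilt builder image.
FROM ng2appdevbuilder AS build
```

An automated build would then only need to fetch the latest source, run docker build against it, and publish the resulting image.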

Now anyone who wants to run the latest can simply pull the most recent image and go for it. That’s the power of Docker!