I know, that title is a mouthful. But that’s exactly what it is! A team at my company, iVision, has been working on an app using the AWESM stack for almost a year now. The app is structured like this:

- A SQL Database, tracked in source control as a database project

- A repository layer based on Entity Framework

- A REST-based web services layer built as an ASP.NET Web API project

- A shared security library that allows sign-on from various sources. It uses JWT to transport authentication and authorization information, then rebuilds the custom IPrincipal and IIdentity in a DelegatingHandler

- An ASP.NET MVC application to host templates (we used this so we can restrict access to templates based on security using the built-in Authorize attribute functionality)

- A single-page Angular app built with TypeScript that now spans hundreds of components and pages

- NUnit unit and integration tests, with Jasmine tests for the client
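
To make the security bullet above more concrete, here is a minimal Python sketch of the general idea: sign a set of claims into an HS256 JWT, then verify the token on the receiving side and rebuild a principal from it. This is not our actual library (which is C# and plugs into a DelegatingHandler). The `Principal` class, the `roles` claim name, and the shared secret are assumptions for illustration, and a production version would also validate the header algorithm, expiry, issuer, and audience.

```python
import base64
import hashlib
import hmac
import json
from dataclasses import dataclass


def _b64url(data: bytes) -> str:
    # JWT uses URL-safe base64 without padding.
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def _b64url_decode(text: str) -> bytes:
    # Restore the padding that JWT strips before decoding.
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def _sign(signing_input: str, secret: bytes) -> bytes:
    return hmac.new(secret, signing_input.encode("ascii"), hashlib.sha256).digest()


def issue_token(claims: dict, secret: bytes) -> str:
    """Build a compact HS256 token: header.payload.signature."""
    header = {"alg": "HS256", "typ": "JWT"}
    signing_input = ".".join(
        _b64url(json.dumps(part, separators=(",", ":")).encode("utf-8"))
        for part in (header, claims)
    )
    return signing_input + "." + _b64url(_sign(signing_input, secret))


@dataclass(frozen=True)
class Principal:
    # Hypothetical stand-in for a custom IPrincipal/IIdentity pair.
    name: str
    roles: tuple

    def is_in_role(self, role: str) -> bool:
        return role in self.roles


def rebuild_principal(token: str, secret: bytes) -> Principal:
    """Verify the signature, then rebuild the principal from the claims."""
    header_b64, payload_b64, signature_b64 = token.split(".")
    expected = _sign(f"{header_b64}.{payload_b64}", secret)
    if not hmac.compare_digest(expected, _b64url_decode(signature_b64)):
        raise ValueError("token signature does not match")
    claims = json.loads(_b64url_decode(payload_b64))
    return Principal(name=claims["sub"], roles=tuple(claims.get("roles", [])))
```

The point of the design is that the services layer never needs a session store: everything required to rebuild the caller's identity travels in the signed token.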

The app is a total rewrite of an existing system that uses an object-oriented database. The environment is designed to continually synchronize data from the object-oriented database to the SQL database for testing and validation purposes. This gives us “continuous migration” so there are no surprises at go-live: all operations can be verified in real time against the legacy system.
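
As a rough illustration of what verifying against the legacy system can look like, the sketch below compares two snapshots of the same records, one from each side, and reports what differs. It is a simplified Python stand-in rather than our actual tooling. The function name and the assumption that both systems can export records keyed by a shared id are mine.

```python
def diff_records(legacy: dict, migrated: dict) -> dict:
    """Compare records keyed by id and report how the two sides differ.

    Both arguments map a record id to that record's field values.
    """
    legacy_ids, migrated_ids = set(legacy), set(migrated)
    return {
        # Present in the legacy system but never synchronized.
        "missing": sorted(legacy_ids - migrated_ids),
        # Present in the SQL database with no legacy counterpart.
        "unexpected": sorted(migrated_ids - legacy_ids),
        # Present on both sides but with different field values.
        "mismatched": sorted(
            key for key in legacy_ids & migrated_ids if legacy[key] != migrated[key]
        ),
    }
```

An empty result in all three lists means the two systems agree for the records that were compared.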

The backlog contains hundreds of items for us to migrate over. Fortunately, the free software we use to manage it gives us “at a glance” visibility into where we are. For example, this chart of story points by state makes it immediately clear we’re just under halfway through the backlog:

You may or may not know that Visual Studio Team Services (VSTS) features a full cross-platform, web-based build system that supports DevOps from start to finish. We’ve been using VSTS to track requirements (the backlog), plan sprint iterations, map team capacity, conduct daily scrums, track burndown, and give the customer real-time transparency into our projected release date based on average velocity over time. It also serves as our source code repository and continuous integration server, and it handles continuous delivery.
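
The release projection is simple arithmetic: divide the remaining story points by the average velocity, round up to whole sprints, and count forward from the next sprint start. The Python sketch below shows that calculation under my own assumptions (fixed-length sprints, a plain average of past sprints). VSTS's own forecasting may differ, and the function and parameter names are hypothetical.

```python
import math
from datetime import date, timedelta


def project_release(remaining_points, completed_per_sprint, sprint_days, next_sprint_start):
    """Estimate the date by which the remaining backlog would be finished.

    completed_per_sprint: story points finished in each past sprint.
    sprint_days: calendar length of one sprint.
    next_sprint_start: the date the next sprint begins.
    """
    if not completed_per_sprint:
        raise ValueError("need at least one completed sprint")
    velocity = sum(completed_per_sprint) / len(completed_per_sprint)
    if velocity <= 0:
        raise ValueError("average velocity must be positive")
    # A partially filled sprint still takes a whole sprint on the calendar.
    sprints_needed = math.ceil(remaining_points / velocity)
    return next_sprint_start + timedelta(days=sprints_needed * sprint_days)
```

With made-up numbers, 100 remaining points at an average of 25 points per sprint needs four more sprints, so two-week sprints put the projected date 56 days after the next sprint starts.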

It’s always exciting to see a good plan come together when the sprint burndown looks like this:

The customer already had an existing setup with Amazon Web Services (AWS), so we are not delivering to Azure. Not a problem! VSTS made perfect sense given that the team is four developers and it comes with five free licenses. The new build system provides a simple link to download and install an agent on the target machine. The agent lets me authenticate with VSTS and configure attributes of the environment so that automated builds run right in the AWS environment. As long as the machine has a path to the Internet, there are no crazy firewall rules to tweak.

Once the agent is installed (in this case as a Windows service), I never have to log on to the box again; everything is managed through the web-based build interface.

At a 5,000-foot level, the build steps I chose are captured here:

By default, the build process synchronizes the source code on the build machine with the main repository. I chose not to clean the working directory each time, which avoids pulling down the full source tree on every build. Even though the app represents hundreds of thousands of lines of SQL, TypeScript, and C# code, the entire build process takes only a few minutes.

The first step is to build the solution. This will catch any errors early on and set up the environment to make the subsequent steps run more easily. At this stage the app is built but nothing has been deployed.

The next step is a PowerShell script I wrote to back up the existing database. The configuration in VSTS is simple: I just enter the path to the script. The script itself loads some dependencies, checks whether a previous backup exists and deletes it if so, then performs the backup.

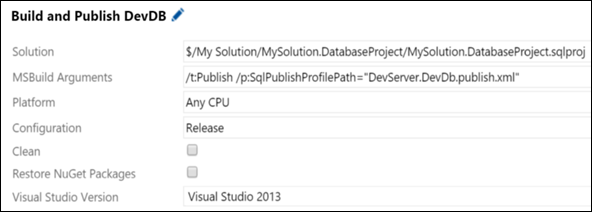
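
The logic of that backup step is small enough to sketch. The version below is Python rather than the PowerShell script I actually run, and it is only an approximation of the same flow: remove the stale backup file, then issue a T-SQL `BACKUP DATABASE` statement. The database name, backup path, and the `run_sql` callback (whatever executes a statement against your server) are placeholders.

```python
import os
from typing import Callable


def backup_database(db_name: str, backup_path: str, run_sql: Callable[[str], None]) -> str:
    """Delete any previous backup file, then back up the database.

    run_sql is supplied by the caller and executes one T-SQL statement.
    Returns the statement that was executed.
    """
    # Remove the stale backup so a failed run cannot be mistaken for a fresh one.
    if os.path.exists(backup_path):
        os.remove(backup_path)

    # Escape the identifier and the string literal before building the statement.
    safe_name = db_name.replace("]", "]]")
    safe_path = backup_path.replace("'", "''")
    statement = f"BACKUP DATABASE [{safe_name}] TO DISK = N'{safe_path}' WITH INIT"
    run_sql(statement)
    return statement
```

Passing the executor in as a callback keeps the sketch independent of any particular database driver and makes the delete-then-backup order easy to test. Note that the file check only makes sense when the script runs on the database server itself, as it does in our setup.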

The next step is a Visual Studio build step, but instead of pointing to the solution, it points to the SQL project. The Publish target, followed by the path to a publish profile, triggers the database upgrade. It uses the publish profile to connect to the local database, compares it against the version in source control, then applies any changes. If there are any issues, the database was just backed up, so we can easily restore it after testing the upgrade.
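
For anyone who prefers to see the equivalent command line, the Python sketch below assembles roughly what that build step passes to MSBuild. The project and profile paths are placeholders, and I am assuming the `SqlPublishProfilePath` property that SQL Server database projects commonly accept. Check the arguments against your own build definition before relying on them.

```python
def sql_publish_args(project_path: str, profile_path: str) -> list:
    """Build an MSBuild command line that publishes a database project."""
    return [
        "msbuild",
        project_path,
        # Run the Publish target instead of the default build.
        "/t:Publish",
        # Point the target at the checked-in publish profile.
        f"/p:SqlPublishProfilePath={profile_path}",
    ]
```

The returned list can be handed to `subprocess.run(args, check=True)` on a machine that has MSBuild and the database tooling installed.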

The next step is to back up the internal website in case the deployment has an issue (so it is easy and fast to roll back). For this I use the “Copy and Publish Build Artifacts” block.

Finally, I can deploy the ASP.NET MVC Angular application. Because there are multiple web applications in the solution, I deploy at the project level using the “msbuild” block. The deployment will automatically apply my web.config transforms so that the correct database, services URL, etc. are configured. Notice that I pass the property to deploy on build and give it a publishing profile that I previously set up and checked into source control. I don’t need to restore NuGet packages because that was taken care of with the initial solution-wide build.
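
The properties mentioned above map onto MSBuild arguments along these lines. This Python sketch only assembles the command. The project path, profile name, and `Release` configuration are assumptions, while `DeployOnBuild` and `PublishProfile` are the standard web publishing properties.

```python
def web_deploy_args(project_path: str, publish_profile: str, configuration: str = "Release") -> list:
    """Build an MSBuild command line that builds and deploys one web project."""
    return [
        "msbuild",
        project_path,
        # The configuration selects which web.config transform is applied.
        f"/p:Configuration={configuration}",
        # Publish as part of the build instead of as a separate step.
        "/p:DeployOnBuild=true",
        # Name of the publish profile checked into source control.
        f"/p:PublishProfile={publish_profile}",
    ]
```

Because the command targets a single project file rather than the solution, each web application in the solution can be deployed with its own profile.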

The same steps (backup, build) are repeated for the Web API project. That’s it! After the last step, the database has been upgraded, the web and service sites have been updated with the proper configurations, and the site is ready to access. All of this can be done with the push of a single button (or run on a regular schedule), and if any issues are encountered, the backups can be used to restore a known previous state.
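
Conceptually, the whole definition is an ordered list of steps where the first failure stops everything after it, which is why each backup comes before the step that changes something. Here is a minimal Python sketch of that ordering. VSTS does this for you, so the runner and the step names in the usage note are purely illustrative.

```python
from typing import Callable, List, Tuple

Step = Tuple[str, Callable[[], None]]


def run_pipeline(steps: List[Step]) -> List[str]:
    """Run steps in order and stop at the first one that raises.

    Returns the names of the completed steps. On failure, the error
    message lists what had already completed so you know what to restore.
    """
    completed: List[str] = []
    for name, action in steps:
        try:
            action()
        except Exception as error:
            raise RuntimeError(
                f"step '{name}' failed; completed steps: {completed}"
            ) from error
        completed.append(name)
    return completed
```

In this post's terms, the list would read: build solution, back up database, publish database, back up website, deploy website, back up Web API, deploy Web API.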

It’s so easy, I had to double-check the deployed apps the first time I did it because it built so fast and ran successfully. This post shares only part of the process; the full cycle will include unit and integration tests as well as a “smoke test” that can be run at the end to verify the build succeeded by accessing the running application.
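
A check that accesses the running application can be as small as requesting a few URLs and confirming each one answers with HTTP 200. The Python sketch below shows one way to do that with only the standard library. The function names are mine, and the URLs you pass in would be your own deployed sites.

```python
import urllib.error
import urllib.request
from typing import Callable, Iterable, List


def http_status(url: str, timeout: float = 10.0) -> int:
    """Return the HTTP status for url, or 0 if the site cannot be reached."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        # The server answered, but with an error status such as 404 or 500.
        return error.code
    except OSError:
        # DNS failure, refused connection, or timeout.
        return 0


def smoke_test(urls: Iterable[str], get_status: Callable[[str], int] = http_status) -> List[str]:
    """Return the URLs that did not answer with HTTP 200."""
    return [url for url in urls if get_status(url) != 200]
```

An empty list means every endpoint responded, and a build step can fail itself whenever the list is non-empty.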

Because the entire process is managed by VSTS, we have full visibility from the product backlog item to the build itself, with changesets automatically linking requirements to code changes and to the builds they were deployed in. If the build fails, it automatically generates a defect so the team can look into the issue and resolve it immediately. Overall, this streamlines the development lifecycle and puts high-quality software into the hands of our customer more frequently.

Happy DevOps!